By Dr. Xenia Wade | The Human Side of AI at Work

74% of companies have yet to show tangible value from their AI investments.

BCG survey of 1,000 senior executives across 59 countries, 2024.

And when BCG looked at why, many of the implementation challenges weren’t about the technology. They were about people and processes.

Most organisations already know this. And yet the most common response to slow mandatory AI adoption is still the same one: add another mandate.

The problem isn’t the mandate itself. Mandates can work. The problem is what usually gets skipped alongside them: the change management, the psychological safety, the meaningful involvement of the people who are supposed to actually change how they work. When that piece is missing, you don’t get adoption. You get what I call Checkbox Adoption.

What Is Checkbox Adoption?

Checkbox Adoption is what happens when mandatory AI rollouts produce the metrics of adoption without the substance of it. Usage is logged, training is completed and dashboards look healthy. But employees have found the path of least resistance through the requirement, and nothing about how they actually work has changed.

It’s not cynicism or laziness on the part of employees. It’s a predictable human response to change that’s been announced rather than managed. When people have no meaningful involvement in a transition that directly affects their work, minimal compliance is a rational response.

Getting the tool selection right is the easier part. What comes after the announcement is where most rollouts fall apart.

Is your organisation building genuine AI capability, or Checkbox Adoption? The diagnostic measures what your dashboards can’t. -> Take the Free Diagnostic

Why Mandatory AI Adoption Backfires Without Change Management

To understand why mandates without change management produce Checkbox Adoption, it helps to understand what the research tells us about how people decide to use new technology.

The Technology Acceptance Model, known as TAM, is the most replicated framework in this space. TAM identifies perceived usefulness and ease of use as the primary drivers of adoption. But the research shows those relationships change in mandatory settings. Huang and Hsu’s study of workplace users of mandatory IT found that when employees are forced to use a new system, workload pressure, perceived control, and anxiety become far more significant predictors than usefulness or ease of use alone. You cannot assume that findings from voluntary adoption research carry over cleanly to mandatory contexts.

Cieslak and Valor’s 2024 integrative review of 63 studies on employee resistance to digital transformation, published in Cogent Business and Management, found that resistance is rarely about the technology itself. It’s driven by perceived threats to resources, job security, workflow autonomy, and professional competence. A mandate that arrives without change management doesn’t resolve those threats. It amplifies them.

The BCG finding is worth sitting with: 70% of implementation challenges in AI rollouts are people- and process-related. Not technical. And yet most organisations allocate the majority of their AI investment to the technology. The people side is treated as a communication plan, or a training module. Not as the actual work.

There’s also a significant cost to high-performing employees. These are often the people with the strongest professional identity and the clearest view of whether AI outputs are actually trustworthy. When a mandatory rollout happens without psychological safety underneath it, they don’t engage more deeply. They disengage. Not from the tool. From the whole change programme. This dynamic connects to what I explore in my article on AI Shame: the private embarrassment of not understanding a technology that others appear to have mastered. Mandatory rollouts amplify AI Shame by removing the psychological safety to admit confusion without career risk.

What the BCG Data Actually Tells Us

74% of companies haven’t generated tangible value from AI. That number is striking, but it’s not because AI doesn’t work. BCG’s report is explicit: the organisations that are seeing real returns treat people and processes as the primary investment. They follow what BCG calls a 70-20-10 approach: 70% of resources on people and processes, 20% on technology, 10% on AI algorithms.

Most mandatory AI rollouts invert that ratio entirely.
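To make the 70-20-10 split concrete, here is a minimal sketch in Python. The function names, category labels, and example figures are illustrative assumptions, not anything taken from BCG's report. It shows what the ratio implies for a given budget and how far a technology-heavy spend sits from it.

```python
# Illustrative only: category names and figures are assumptions for this sketch.
SPLIT_PERCENT = {
    "people_and_processes": 70,
    "technology": 20,
    "ai_algorithms": 10,
}


def allocate(budget: int) -> dict[str, int]:
    """Split a budget (whole currency units) using the 70-20-10 ratio."""
    return {name: budget * pct // 100 for name, pct in SPLIT_PERCENT.items()}


def gap_to_target(actual: dict[str, int]) -> dict[str, int]:
    """Return target minus actual spend per category (positive = underfunded)."""
    target = allocate(sum(actual.values()))
    return {name: target[name] - actual.get(name, 0) for name in SPLIT_PERCENT}


# A hypothetical technology-heavy rollout budget of 1,000,000.
actual_spend = {
    "people_and_processes": 150_000,
    "technology": 600_000,
    "ai_algorithms": 250_000,
}

print(allocate(1_000_000))
print(gap_to_target(actual_spend))
```

In this made-up example the people-and-processes line is underfunded by 550,000 against the 70-20-10 target, while technology and algorithms are both overfunded.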

When Li, Zhu, and Hua examined what separates organisations that capture AI value from those that don’t, writing in Harvard Business Review in 2025, the conclusion was the same. Most firms struggle not because the technology fails, but because their people, processes, and organisational readiness haven’t been treated as the actual work.

The research doesn’t say mandates always fail. It says mandates produce compliance without commitment when they’re not accompanied by genuine change management. That becomes a serious problem when organisations skip adequate training, ignore employee concerns, or deploy tools without demonstrating clear value in the employee’s day-to-day work.

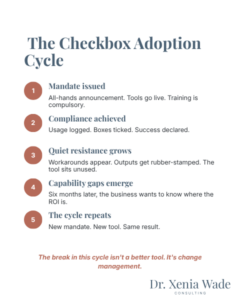

The Checkbox Adoption Cycle

The pattern is predictable.

- Mandate issued. Leadership announces a company-wide AI requirement. Tools are deployed. Training is scheduled.

- Compliance achieved. Usage metrics hit their targets. Completion certificates are filed. The rollout is declared a success.

- Quiet resistance grows. Employees find workarounds. Outputs are rubber-stamped without review, or quietly ignored. The tool becomes shelfware dressed up as productivity.

- Capability gaps emerge. Six to twelve months later, the business asks why AI isn’t generating the promised return.

- The cycle repeats. A new mandate, a new tool, a new training module. And the same result.

The break in this cycle isn’t a better tool. It’s treating the human side of the rollout as the actual work, not the afterthought.

I use the concept of Emotional Carrying Capacity to describe an organisation’s genuine ability to absorb the psychological weight of change. Mandatory AI adoption doesn’t happen in a vacuum. It lands on people already navigating uncertainty, workload pressure, and real questions about how AI affects their professional future. Rolling out a mandate without first assessing that capacity is one of the most common, and most avoidable, reasons AI adoption stalls.

What Genuine Adoption Looks Like

The mandate itself is rarely the problem. There are legitimate reasons to make AI adoption a requirement, including regulatory compliance under the EU AI Act, baseline standardisation across a business, and maintaining competitive capability. A compliance floor is appropriate.

The question is what gets built around it.

- Change management alongside the mandate. The organisations seeing genuine results treat the rollout as a change programme, not a deployment. That means proper stakeholder engagement, clear communication about why this matters for employees specifically, and structured support for the people who are finding it hard.

- Psychological safety before the tools go live. Employees who feel safe to make mistakes, ask questions, and voice scepticism engage with AI differently than those who feel monitored for compliance. This is one of the five drivers in the Organizational Adoption Profile, and the one that determines whether the other four can activate. See my earlier article on psychological safety and AI adoption for the research on why this comes first.

- Clear personal value, not just business value. Unilever’s voluntary rollout of its internal AI assistant Unabot found that 36% of employees tried it voluntarily and 80% of those users continued, a retention rate that justified expansion to 190 markets globally. The question that drove that result wasn’t “how does this help the business?” It was “how does this make your specific job easier?” When employees can see that answer clearly, motivation becomes intrinsic.

- Pilots before full rollout. Voluntary early adopter cohorts generate social proof and give organisations real data about what’s working before they scale. That’s a fundamentally different starting point than a company-wide mandate on day one.

A Different Model: Voluntary, Validated, Scaled

Across the organisations doing this well, the structure is consistent.

- Voluntary pilots with structured support. Deploy to willing early adopters with genuine change management. Measure quality of use and psychological safety, not just usage rates.

- Validated and socialised. Capture evidence and peer stories from the pilot cohort. Social proof does the work that mandates alone can’t.

- Scaled with a floor, not a ceiling. Where compliance requirements exist, introduce them as the minimum. Build voluntary pathways to deeper capability above that floor.

This produces genuine AI literacy. The kind that generates business outcomes, not completion certificates.

What Leaders Need to Ask

If you’re overseeing an AI rollout right now, three questions are worth sitting with honestly.

- Are your adoption metrics measuring usage, or quality of use? What do they actually tell you?

- What change management is genuinely accompanying your mandate? Not a communication plan. Not a training module. Real, structured support for people navigating the uncertainty of what this means for their work.

- What is your organisation’s Emotional Carrying Capacity right now? AI adoption doesn’t land in isolation. Have you assessed whether this is the right time and the right approach for a mandatory rollout?
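One way to make the first question concrete is to compute a usage metric and a couple of quality-of-use signals from the same log. The sketch below is a rough illustration under stated assumptions: the `Session` record and its fields (`task_required`, `output_reviewed`) are hypothetical, and real tool logs may not capture anything like them. It is not a validated measure of adoption.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """One logged AI-tool session. All fields are hypothetical."""
    employee: str
    task_required: bool    # True if the task falls under the mandate
    output_reviewed: bool  # True if the employee checked or edited the output


def usage_rate(sessions: list[Session], headcount: int) -> float:
    """Share of employees with at least one session (the dashboard number)."""
    if headcount == 0:
        return 0.0
    return len({s.employee for s in sessions}) / headcount


def discretionary_share(sessions: list[Session]) -> float:
    """Share of sessions on tasks the mandate did not require."""
    if not sessions:
        return 0.0
    return sum(not s.task_required for s in sessions) / len(sessions)


def review_rate(sessions: list[Session]) -> float:
    """Share of sessions where the output was reviewed rather than rubber-stamped."""
    if not sessions:
        return 0.0
    return sum(s.output_reviewed for s in sessions) / len(sessions)


# Made-up log for a team of four.
log = [
    Session("ana", task_required=True, output_reviewed=False),
    Session("ben", task_required=True, output_reviewed=False),
    Session("cal", task_required=True, output_reviewed=True),
    Session("cal", task_required=False, output_reviewed=True),
    Session("dee", task_required=True, output_reviewed=False),
]

print(usage_rate(log, headcount=4))   # 1.0
print(discretionary_share(log))       # 0.2
print(review_rate(log))               # 0.4
```

In this invented log, usage is 100%, yet only one session in five is discretionary and most outputs go unreviewed. That is the shape Checkbox Adoption would take in the data: a healthy dashboard number sitting on top of weak quality-of-use signals.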

The organisations that will lead with AI in the next three years aren’t the ones that issued the widest mandates the fastest. They’re the ones that treated the human side of the transformation as the actual investment. BCG put it plainly: when companies undertake AI transformations, they need to focus two-thirds of their effort on people-related capabilities and the other third on technology and algorithms.

Most organisations have that backwards. The ones getting it right don’t.

AI Adoption Readiness Diagnostic

Your Organisational Adoption Profile, including Psychological Safety, Adaptability Mindset, and Adoption Capacity, determines whether your AI rollout creates real change or a very convincing-looking dashboard. -> Get Your Profile

Frequently Asked Questions About AI and Psychological Safety

What is Checkbox Adoption?

Checkbox Adoption occurs when mandatory AI rollouts produce surface-level compliance without genuine behaviour change. Employees complete the training. Usage is logged. But the tools aren’t meaningfully integrated into how work actually gets done. The adoption numbers look healthy while the business impact remains flat.

Is a mandatory AI requirement ever appropriate?

Yes. Where regulatory requirements exist, such as the EU AI Act’s AI literacy obligation (Article 4) for staff who work with AI systems, a baseline compliance requirement is appropriate. The issue isn’t the mandate. It’s what gets skipped alongside it. A mandate that isn’t accompanied by proper change management, employee involvement, and psychological safety tends to produce Checkbox Adoption, not capability.

Why do high performers disengage from mandatory AI rollouts?

High performers typically have a strong professional identity tied to their expertise and judgement. Mandatory AI adoption without adequate support can trigger Identity Drift, the uncomfortable sense that a technology is being positioned as superior to skills they’ve spent years developing. Without psychological safety to voice that discomfort, high performers tend to comply superficially or disengage from the change programme altogether.

What does the research say about technology adoption in mandatory settings?

The Technology Acceptance Model tells us that perceived usefulness and ease of use drive adoption. But in mandatory settings the research shows those relationships shift significantly. Workload pressure, anxiety, and perceived control become far more important predictors. You can’t apply voluntary-adoption findings to a mandatory rollout and expect the same results.

How can you tell whether adoption is genuine?

Look beyond completion metrics. Are employees integrating AI into discretionary as well as required tasks? Do they describe the tools as genuinely useful? What do skip-level conversations reveal about how middle managers actually feel about what they’re nominally championing? Is there organic sharing of AI use cases, or does it feel like a topic people avoid? A meaningful assessment goes beyond usage logs to measure psychological readiness, intrinsic motivation, and psychological safety around AI.

What should you do if your AI rollout has stalled?

Start with honest diagnosis. Most organisations with stalled rollouts have been measuring the wrong things: tracking training completions and usage rates rather than the conditions that determine whether adoption is genuine. A capability gap requires a different intervention than a compliance gap. Assessing your organisation’s readiness profile across the five drivers of genuine change will tell you where the real lever is.

Dr. Xenia Wade specializes in Human-Centered AI Change, helping organizations build the emotional and cultural readiness their people need to actually adopt AI. With a PhD in Human Resource Management and experience across enterprise-scale organizational transformations, she focuses on the human side of AI at work, the fears, the identity shifts, and the invisible barriers that no productivity dashboard can capture.


Want to know how psychologically safe your organization is? Take the free diagnostic →


Sources

- Boston Consulting Group. (October 2024). Where’s the Value in AI? Survey of 1,000 CxOs and senior executives across 59 countries and 20 sectors. bcg.com

- Cieslak, V. & Valor, C. (2024). “Moving beyond conventional resistance and resistors: an integrative review of employee resistance to digital transformation.” Cogent Business and Management.

- Huang, S-H. & Hsu, W-K. (2010). “The acceptance of workplace users for a new IT with mandatory use.” Asia Pacific Management Review.

- Li, J., Zhu, F., & Hua, P. (2025). “Overcoming the Organizational Barriers to AI Adoption.” Harvard Business Review.