By Dr. Xenia Wade | The Human Side of AI at Work

Here’s something nobody talks about in your AI strategy meetings.

Almost half of your employees are using AI at work, and they’re hiding it from you. Not because they’re doing anything wrong. But because they’re afraid of what using AI says about them.

This is AI Shame: the fear of being judged as less competent when technology exposes gaps in knowledge. It’s one of the biggest invisible barriers to AI adoption that most organizations don’t even know exists. And until you address it, no amount of training, tooling, or executive enthusiasm will close your adoption gap.

The Numbers Behind Hidden AI Use at Work

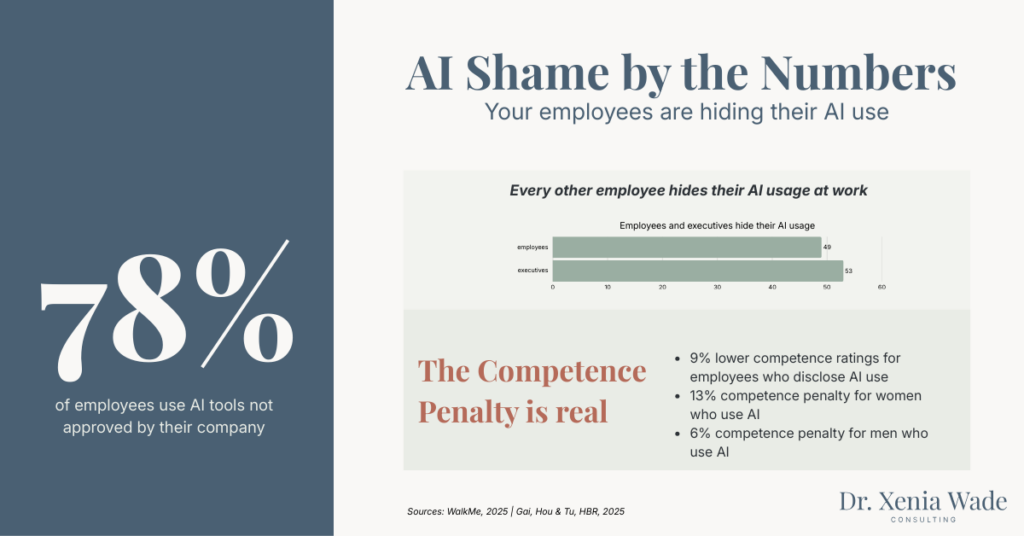

According to WalkMe’s 2025 AI in the Workplace Survey of 1,000 U.S. workers who use AI in their jobs, 49% have concealed their AI use at work to avoid judgment. But here’s the part that should stop every CHRO in their tracks: 53% of C-suite leaders are hiding it too.

Let that sink in. The people rolling out the AI strategy are secretly ashamed of using the very tools they’re telling everyone else to adopt.

Everyone’s using AI. Almost nobody’s talking about it.

And it’s happening alongside another alarming stat: 78% of employees are using AI tools not provided or approved by their employer. When people feel too ashamed to use the official tools openly, they turn to shadow AI, creating security risks, compliance gaps, and governance blind spots that your dashboards can’t see.

What AI Shame Actually Looks Like Inside Your Organization

I’ve worked across many organizational transformations, and the pattern is always the same.

People don’t resist the tools because they don’t understand them. They resist because using them triggers a deeply human fear: looking like they can’t do their job anymore.

Every time an employee opens an AI tool, there’s a silent internal negotiation happening. They question whether they’re doing it right. They wonder if they’re becoming obsolete. They feel guilty for not being better at this already. And they’re too embarrassed to ask for help.

This plays out in three specific fears, which I call the triple threat of AI Shame:

Fear of being labeled lazy. “If I use AI for this report, will my manager think I couldn’t be bothered to do it myself?”

Fear of appearing incompetent. “If I can’t figure out how to write a decent prompt, what does that say about me?”

Fear of becoming obsolete. “If AI can do 80% of my job, do they still need me?”

None of these fears are about the technology. They’re about identity, about what using AI says about who we are professionally.

The AI Competence Penalty Is Real and Measurable

If you think AI Shame is just a feelings problem, here’s where it gets measurable.

A landmark study published in Harvard Business Review in August 2025, led by researchers at Peking University and Hong Kong Polytechnic University, studied 28,698 software engineers at a major technology company. In a randomized experiment, 1,026 engineers reviewed identical Python code. The only difference was whether the code’s author was described as having used AI assistance.

The findings are stark. Engineers described as using AI received 9% lower competence ratings for identical work. Same quality. Same output. The only thing that changed was the label.

And the penalty wasn’t distributed equally. Female engineers saw a 13% competence drop, compared to 6% for men. The people who judged hardest? Senior male engineers who hadn’t adopted AI themselves. They penalized female AI users up to 26% more than their male counterparts.

A separate study published in the Proceedings of the National Academy of Sciences (PNAS) in May 2025 confirmed the pattern. Duke University researchers ran four experiments with 4,439 participants. People who use AI are perceived as lazier, less competent, and less diligent than those who receive help from non-AI sources, even when the outcomes are identical. And critically, managers who don’t use AI themselves were significantly less likely to hire candidates who do.

The Peking University study also found that the anticipated competence penalty was the single strongest predictor of AI refusal. It was stronger than lack of training, age, or access to tools.

Read that again. The number one reason people don’t adopt AI isn’t a skills gap. It’s fear of judgment.

What Organizations Measure vs. What Actually Matters

Here’s where most AI adoption strategies fall apart.

Organizations track tool activation rates. They measure time saved. They count prompts used. And they report all of this up the chain with confidence.

But they’re not measuring how many people feel incompetent on a daily basis. They’re not tracking the emotional energy it takes to keep learning something that makes you feel like an intern again. And they’re definitely not counting how many employees are quietly opting out because it feels safer than failing publicly.

AI Shame is invisible on every productivity dashboard. But it’s quietly killing your adoption numbers.

I see this in every engagement. A company invests millions in new tools. Leadership is excited. Training gets rolled out. And six months later, actual usage is sitting at 15 to 20 percent while everyone wonders what went wrong.

What went wrong is that nobody addressed the emotional layer. Organizations jump straight to AI bootcamps and prompt engineering workshops. They teach people how to use the tools but never acknowledge the identity shift required to trust tools that feel smarter than the people using them.

AI Shame Thrives Where Blame Cultures Live

AI Shame is what happens in a blame culture.

In a learning organization, using AI isn’t a confession. It’s just how the work gets done. People share the prompt, walk through their checks, show the result. It’s normalized and taught. The guardrails are clear.

But in a blame culture, AI use becomes something to hide. People use it quietly, bank the productivity gains, and never tell anyone because the alternative is being judged.

And there’s a perverse incentive making this worse. Many employees reported that their productivity gains from AI didn’t lead to more creative time or professional development. Instead, the gains led to higher expectations, more work, and more pressure. So people hide their AI use as a survival strategy against burnout. Meanwhile, organizations lose the chance to redistribute work more intelligently or to learn from what’s actually working.

Without clear guidelines, people operate in a grey zone. And that grey zone feeds the shame spiral. Clear boundaries paradoxically create more psychological safety to experiment.

The Real Cost of AI Shame to Your Organization

When people hide their AI use, the consequences go far beyond hurt feelings.

Shadow AI creates security risks. Some employees use unapproved tools on personal devices because they’re afraid to use the official ones openly. When they do, your data flows through channels you can’t see or control. WalkMe found that 78% of employees already use AI tools their employer hasn’t provided or approved.

Knowledge sharing stops. When someone figures out an AI workflow that saves three hours a week, they keep it to themselves. Best practices stay hidden. Teams reinvent the wheel alone.

Your AI investment sits unused. That multimillion-dollar Copilot rollout? The adoption numbers leadership tracks are a vanity metric. Real usage, the kind that transforms how work gets done, requires people to feel safe enough to experiment openly.

You lose your best people first. High performers who feel the competence penalty most acutely are the ones most likely to quietly disengage. They don’t push back. They don’t complain. They just stop using the tools and revert to what’s familiar.

How to Break the AI Shame Cycle

The solution to AI Shame isn’t more training. You can’t teach someone out of an emotional barrier. But you can create the conditions where the barrier dissolves.

Leaders Must Go First, Imperfectly

When executives and managers openly share how they use AI, including the messy experiments and the failed prompts, it gives everyone else permission to be imperfect.

If a VP says “I tried to get Claude to analyze this data and it took me four attempts to get something useful,” that single moment does more for adoption than any training deck. Transparency starts at the top. You can’t ask your people to be vulnerable with AI if leadership is hiding their own use.

Name the Emotional Experience Out Loud

Most organizations never acknowledge that learning AI is emotionally exhausting. Just saying out loud, “It’s normal to feel overwhelmed. It’s normal to feel like an intern again. That doesn’t mean you’re bad at your job,” changes everything.

When a leader names the fear, the room exhales. People start asking the questions they’ve been holding back for months.

Reframe AI as a Professional Skill, Not a Shortcut

AI isn’t cheating. It’s a professional tool. But as long as the narrative frames it as a shortcut, or worse, as something used only by people who “can’t do real work,” adoption will stall.

The reframe that works: using AI well is a skill worth developing. AI handles the repetitive work so people can focus on the thinking, the judgment, the creativity that only humans bring. That framing changes how people relate to the tool entirely.

Create Peer Learning, Not Only Formal Training

Formal training feels evaluative. Peer learning feels safe. Let early adopters share their messy experiments, not polished presentations. Give them room for a real “here’s what I tried, here’s where it went wrong, here’s what actually worked.”

Peer modeling builds psychological safety faster than any training program. When someone at your level, in your role, says “I didn’t know what I was doing either,” it normalizes the learning curve.

Evaluate Work Quality, Not Tool Choice

If your performance reviews penalize people for using AI, even implicitly, you’ve built a system that punishes adoption. Judge people on the quality of what they deliver, not how they got there.

This is especially critical for women and older workers, who the research shows face significantly harsher competence penalties for AI use. Your evaluation systems need to actively counteract this bias, not reinforce it.

This Isn’t a Tech Problem. It’s a Human One.

I’ve spent my career studying how people experience organizational change. That work has come through a PhD in Human Resource Management and through supporting digital transformations at a leading tech consulting company. It has also come through the messy reality of helping real teams navigate real disruption.

And here’s what I keep coming back to: tech adoption doesn’t fail because of the technology. It fails because organizations treat adoption as a training problem when it’s actually a readiness problem.

The gap between “can use it” and “actually uses it” is almost always emotional. Not technical.

This is exactly what my Organizational Adoption Profile measures: the emotional friction that kills AI adoption before it starts. It assesses five readiness drivers that shape how people respond to change: Adaptability Mindset, Psychological Safety, Empowerment Orientation, Action Style, and Adoption Capacity.

Because you can’t build sustainable AI adoption if you don’t design for the emotional experience first.

Start Here: Measure What Actually Matters

Maybe you’re reading this and recognizing your own organization in the stalled adoption, the hidden AI use, and the gap between your investment and your actual results. If so, you’re not alone. And the fix isn’t another round of training.

The fix is understanding where your people actually are emotionally, and building from there.

→ Take the free AI Adoption Readiness Diagnostic to find out where your organization really stands. Not where your dashboards say you are. Where your people actually are.

Because sustainable AI adoption isn’t about better tools or clearer workflows. It’s about building psychological safety so people feel safe enough to be bad at something new.

And that starts with naming the thing nobody’s talking about. It starts with addressing AI Shame.

Frequently Asked Questions About AI Shame

What is AI Shame?

AI Shame is the fear of being judged as less competent when using artificial intelligence tools at work. It shows up as employees hiding their AI use, avoiding official AI tools, or refusing to adopt AI altogether. The cause is not technical barriers but the social and professional risks employees perceive.

How many employees hide their AI use at work?

According to WalkMe’s 2025 AI in the Workplace Survey, 49% of employees who use AI at work have concealed that use to avoid judgment. Among C-suite executives, 53% admit to hiding their AI use. Gen Z workers are hit hardest, with 62% concealing their AI use.

What is the AI competence penalty?

The competence penalty is a documented bias in which people who use AI receive lower competence ratings, even when their work is identical to that of non-AI users. In the Harvard Business Review study of software engineers, AI users received 9% lower competence ratings. Women faced a 13% penalty, compared to 6% for men.

Why do most AI adoption strategies fail?

Most AI adoption strategies focus exclusively on technical training and tool access. They ignore the emotional and cultural barriers that actually determine whether employees use the tools, such as AI Shame, fear of judgment, and identity threat. Organizations treat adoption as a training problem when it’s a readiness problem.

How can organizations reduce AI Shame?

Key strategies include: having leaders model imperfect AI use openly, naming the emotional experience of AI adoption, and reframing AI as a professional skill rather than a shortcut. They also include creating peer learning environments alongside formal training and redesigning performance evaluations to assess work quality rather than tool choice.

Dr. Xenia Wade specializes in Human-Centered AI Change, helping organizations build the emotional and cultural readiness their people need to actually adopt AI. She holds a PhD in Human Resource Management and has experience across enterprise-scale organizational transformations. She focuses on the human side of AI at work: the fears, the identity shifts, and the invisible barriers that no productivity dashboard can capture.

Want to know if AI Shame is silently stalling your transformation? Take the free diagnostic →


Sources

- WalkMe. “AI in the Workplace Survey.” August 2025. Survey of 1,000 U.S. working adults who use AI in their jobs.

- Gai, P.J., Hou, J., & Tu, Y. “Competence Penalty Is a Barrier to the Adoption of New Technology.” Harvard Business Review, August 2025. Study of 28,698 software engineers, including a randomized experiment with 1,026 engineers.

- Reif, J.A., Larrick, R.P., & Soll, J.B. “Evidence of a social evaluation penalty for using AI.” Proceedings of the National Academy of Sciences, 122(19), May 2025. Four preregistered experiments with 4,439 participants.

- WalkMe. “The State of Digital Adoption 2025.” Survey of 3,700 senior executives and employees.