By Dr. Xenia Wade | The Human Side of AI at Work

Everyone’s talking about the fear of AI replacing jobs. That’s real. But job loss is only part of what your people are carrying.

Ninety-two million positions displaced globally by 2030. Nearly 55,000 U.S. job cuts attributed to AI in a single year. Six to seven percent of the American workforce facing displacement under baseline scenarios. The numbers are real, and they’re accelerating. But the fear your people feel when they read those headlines? That fear isn’t only about unemployment statistics. It’s about something much harder to fix.

It’s about identity. About whether the skills they spent twenty years building still matter. About whether they still matter.

And until your AI adoption strategy accounts for that, you’re solving the wrong problem.

How Many Jobs Will AI Replace?

The World Economic Forum’s (WEF) Future of Jobs Report 2025, drawing on data from over 1,000 companies across 55 economies, projects that 92 million jobs will be displaced globally by 2030, equivalent to 8% of current employment. In the same timeframe, 170 million new roles will be created, a net gain of 78 million positions. That net positive gets cited a lot in executive presentations. What gets cited less: 39% of existing workplace skills will be disrupted or outdated within five years. The churn is enormous, even if the totals look balanced on a slide (World Economic Forum, 2025).
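For readers who want to check the arithmetic behind those headline figures, here is a minimal sketch in Python. It uses only the numbers quoted above; the variable names are illustrative, and the implied employment base is an assumption backed out of the rounded 8% figure, not a number taken from the report.

```python
# Figures quoted above from the WEF Future of Jobs Report 2025 (in millions of jobs).
displaced_m = 92
created_m = 170
displaced_share = 0.08  # "equivalent to 8% of current employment"

# Net change: roles created minus roles displaced.
net_gain_m = created_m - displaced_m

# Gross churn: every role that is either created or displaced.
gross_churn_m = created_m + displaced_m

# Assumption: back out the employment base implied by the rounded 8% share.
implied_base_m = displaced_m / displaced_share

print(f"Net gain: {net_gain_m} million")
print(f"Gross churn: {gross_churn_m} million")
print(f"Implied employment base: about {implied_base_m:.0f} million")
print(f"Churn as share of implied base: {gross_churn_m / implied_base_m:.0%}")
```

The point of separating net gain from gross churn is the same one made above: the net figure looks reassuring, while the gross figure shows how many roles are actually in motion.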

Goldman Sachs Research narrows the lens to the U.S. Their analysis of over 800 occupations estimates that 6 to 7% of the American workforce faces displacement under baseline AI adoption, with the range stretching from 3% to 14% depending on how aggressively companies deploy the technology. Unemployment may rise by roughly half a percentage point during the transition, though Goldman’s economists expect the impact to be temporary as new opportunities emerge. The jobs most at risk: computer programmers, accountants, legal assistants, customer service representatives. White-collar roles that people spent years training to fill (Briggs & Dong, 2025).

And this isn’t just projection. According to outplacement firm Challenger, Gray & Christmas, U.S. employers cited AI as the direct reason for nearly 55,000 job cuts in 2025, a sharp escalation from roughly 4,600 when the firm first started tracking the category in 2023. Total layoffs for the year exceeded 1.2 million, the highest annual figure since the pandemic. Companies like Amazon, Microsoft, and Salesforce openly attributed significant reductions to AI efficiency gains (Challenger, Gray & Christmas, 2026).

Is your organization actually ready for AI, or just pretending to be? The AI Adoption Readiness Diagnostic measures what your dashboards can’t: the emotional and cultural barriers that are silently stalling your transformation. → Take the free diagnostic now

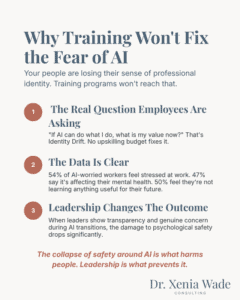

Why Training Won’t Fix the Fear of AI

Here’s where most leaders get it wrong. They hear “people are afraid of AI” and they jump to solutions: training programs, upskilling budgets, prompt engineering workshops. Skills development matters; the WEF report names it as employers’ top workforce strategy through 2030. But it misses the deeper issue entirely.

The fear of AI replacing jobs runs deeper than a skills gap. It’s an identity crisis.

I recently spoke with a senior leader at a banking organization of more than 40,000 people. He told me something that has stayed with me: that their leaders, not the frontline, the leaders, were the ones most afraid. Afraid that their expertise, their judgment, their years of hard-won knowledge no longer matter. That the thing that made them them at work is being rendered worthless.

This isn’t a one-off. It’s the pattern I see everywhere.

I call this Identity Drift: the disorienting experience of watching your professional identity loosen as the capabilities you built your career on become automatable. Grounded in Role Identity Theory, it reflects the fact that people don’t just perform tasks; they build their sense of self around the expertise those tasks require. When AI begins to replicate that expertise, the threat becomes existential: if AI can do what I do, what is my value now? This makes AI adoption fundamentally different from past technology shifts, and it’s happening everywhere, often silently.

Research backs this up. A 2025 study found the link: AI adoption erodes psychological safety, and eroded safety increases depression. But the study also found that leadership changes the equation. When leaders were transparent about what was happening and showed genuine concern for their people during AI transitions, the negative effects on safety dropped significantly. Same AI rollout. Different leadership approach. Entirely different outcome for people (Kim, Kim & Lee, 2025).

This is the same finding I explored in my previous blog post on psychological safety as the secret engine of AI adoption: it’s not the technology that harms people. It’s the collapse of safety around it.

The American Psychological Association’s 2024 Work in America survey of more than 2,000 employed U.S. adults fills in the picture further. Workers who worried about AI taking over their job duties were significantly more likely to feel tense or stressed during the workday (54% vs. 36%), to say their work environment negatively affects their mental health (47% vs. 28%), and to report they’re not learning new things that will help them in the future (50% vs. 31%). The survey also found that workplaces with a culture of psychological safety produced more confident and resilient employees, even amid the same AI pressures (American Psychological Association, 2024).

How Fear Becomes Silent Resistance and AI Shame

When psychological safety collapses, the consequences go far beyond individual distress. Fear shows up as what I call Silent Resistance: the phenomenon where employees disengage from AI adoption not through overt pushback, but through quiet avoidance. They nod along in town halls. They complete the mandatory training. Then they go right back to doing things the old way, because engaging with the new tools feels like speeding up their own obsolescence.

Underneath that resistance often sits something even more corrosive: AI Shame. The shame of not understanding a technology everyone around you seems to have mastered. And the shame of admitting you used it, because using AI signals to others that your work is less yours, less skilled, less earned. Either way, people hide.

As I wrote in my earlier piece on AI Shame, 49% of employees are already concealing their AI use at work, and the hiding runs straight to the C-suite, where 53% of leaders do the same. They’re not resisting the technology. They’re hiding from the judgment that comes with using it.

The APA data confirms the retention risk: workers worried about AI were nearly twice as likely to say they plan to look for a new job within the year compared to those who aren’t worried. That’s not a training gap. That’s a retention crisis hiding in plain sight.

This is the silent resistance loop I described in my article on psychological safety and AI adoption: anxiety drives hiding, hiding prevents shared learning, lack of learning reinforces anxiety, and the cycle accelerates. From the outside, it looks like a training problem. From the inside, it’s an Emotional Carrying Capacity crisis, and no upskilling program will fix it if you don’t address the fear underneath first.

Where is Silent Resistance hiding in your organization? The AI Adoption Readiness Diagnostic measures five readiness drivers, including psychological safety, so you can see what’s really blocking adoption. → Take the free diagnostic now

How to Address AI Obsolescence Fear at Work

If your AI adoption strategy starts and ends with technical training, you’re solving the wrong problem. Here’s what actually moves the needle.

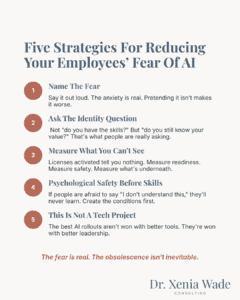

Name the fear out loud

The most powerful thing a leader can do is acknowledge that the fear of becoming obsolete is rational. Not dismiss it. Not rush past it with reassurance. Name it. When 92 million jobs are projected to be displaced and nearly 55,000 U.S. workers lost jobs to AI in a single year, people aren’t being dramatic. They’re reading the room. When leaders pretend the anxiety doesn’t exist, it doesn’t go away. It goes underground and turns into Silent Resistance.

Separate the identity question from the skills question

Before you launch another training initiative, help people answer a more fundamental question: Who am I in this new world? The data analyst whose value was in meticulous spreadsheet work needs help understanding that their real value was always in the judgment behind the numbers and that AI makes that judgment more valuable, not less. That reframe isn’t a communications task. It’s a change management task. It requires deliberate attention to Identity Drift before adoption can stick.

Measure readiness, not just adoption

Most organizations track how many licenses got activated. But Checkbox Adoption, people going through the motions of AI use without genuine engagement, is almost always a symptom of unresolved readiness barriers, not a training gap. Psychological Safety is one of five drivers in the Organizational Adoption Profile, alongside Adaptability Mindset, Empowerment Orientation, Action Style, and Adoption Capacity, and it functions as the foundation. Without safety, the other four cannot activate. Measure what your dashboards cannot see: the emotional and cultural state of your workforce, where resistance is concentrated, and what is actually driving it.

Build psychological safety before you build AI competency

Workplaces with strong psychological safety produced more confident employees even amid AI uncertainty. The Kim, Kim, and Lee (2025) research shows the mechanism directly: when AI adoption outpaces psychological safety, depression can follow. Teams that feel safe enough to say “I don’t understand this” are the teams that actually learn. Teams where admitting confusion carries career risk are the teams where AI Shame festers and adoption quietly stalls.

Stop treating AI adoption as a technology project

AI adoption is a human transformation that happens to involve technology. The organizations getting this right aren’t the ones with the best tools. They’re the ones that treated the emotional, psychological, and cultural dimensions of change with the same rigor they applied to the technical rollout.

Will AI Make Me Obsolete? The Real Question

Yes, AI will displace roles. The projections from the WEF, Goldman Sachs, and the layoff data tracked by Challenger are clear and accelerating.

But the question that actually determines outcomes isn’t whether AI will change work. It’s whether your organization is psychologically ready to move through that change without losing the people, the trust, and the institutional knowledge that no algorithm can replicate.

The fear of becoming obsolete because of AI is real. But obsolescence isn’t inevitable. It’s a failure of organizational readiness.

And readiness starts with understanding what your people are actually afraid of.

Not where your dashboards say you are. Where your people actually are. The AI Adoption Readiness Diagnostic measures what productivity metrics miss: the psychological, cultural, and emotional readiness that determines whether your AI investment pays off or quietly dies. → Take the free AI Adoption Readiness Diagnostic

Frequently Asked Questions About AI and Psychological Safety

How many jobs will AI replace?

The projections vary significantly depending on the source and methodology. The World Economic Forum’s Future of Jobs Report 2025 projects 92 million jobs displaced globally by 2030, with 170 million new roles created for a net gain of 78 million. Goldman Sachs Research estimates 6 to 7% of the U.S. workforce faces displacement under baseline AI adoption, concentrated in white-collar and administrative roles.

Why are employees afraid of AI beyond job loss?

Job loss is the surface fear. Underneath it sits something harder to fix: professional identity threat, shame around not understanding the technology, and fear that years of expertise are becoming worthless. A 2025 peer-reviewed study found that AI adoption directly erodes psychological safety, which in turn increases depression.

What is Identity Drift?

Identity Drift is the disorienting experience of watching your professional identity erode as the capabilities you built your career on become automatable. Role Identity Theory tells us that people derive a core part of their self-concept from their professional role. When AI begins performing that role, it does not just change the work. It threatens who someone understands themselves to be. The HR director who wonders if 20 years of expertise still counts. The accountant who sees AI doing in seconds what used to take them hours. Identity Drift is one of the biggest invisible barriers to AI adoption because it is not a skills gap. It is an existential question about professional worth.

What is AI Shame?

AI Shame is the discomfort professionals feel around AI that pulls in two directions at once. The shame of not understanding a technology everyone around you seems to have mastered. And the shame of admitting you used it, because using AI signals to others that your work is less yours, less skilled, less earned. Research shows 49% of employees conceal their AI use at work; among C-suite leaders, 53% do the same. It drives avoidance and silent disengagement from adoption initiatives, often among an organization’s most experienced people.

How can leaders address the fear of AI replacing jobs?

Name the fear openly. Distinguish the identity question from the skills question; they require different interventions. Measure psychological readiness, not just tool activation rates. Build safety so people can admit confusion without career risk. And treat AI adoption as a human transformation, not a technology rollout.

What is the Organizational Adoption Profile?

The Organizational Adoption Profile (OAP) is a readiness framework measuring five drivers that determine how people respond to AI adoption: Psychological Safety, Adaptability Mindset, Empowerment Orientation, Action Style, and Adoption Capacity. The free AI Adoption Readiness Diagnostic is built on the OAP and helps CHROs identify where adoption barriers are concentrated.

Dr. Xenia Wade specializes in Human-Centered AI Change, helping organizations build the emotional and cultural readiness their people need to actually adopt AI. With a PhD in Human Resource Management and experience across enterprise-scale organizational transformations, she focuses on the human side of AI at work: the fears, the identity shifts, and the invisible barriers that no productivity dashboard can capture.

Follow Dr. Xenia Wade on LinkedIn.

Want to know how psychologically safe your organization is? Take the free diagnostic →

Related concepts: Identity Drift | Silent Resistance | AI Shame | Emotional Carrying Capacity | Checkbox Adoption

Sources

World Economic Forum. (2025). The Future of Jobs Report 2025. Fifth edition. Data from 1,000+ companies, 22 industry clusters, 55 economies.

Briggs, J. & Dong, S. (2025). Quantifying the Risks of AI-Related Job Displacement. Goldman Sachs Research.

Challenger, Gray & Christmas. (2026). 2025 Year-End Job Cut Report. Published January 8, 2026.

Kim, B.J., Kim, M.J. & Lee, J. (2025). The dark side of artificial intelligence adoption: Linking AI adoption to employee depression via psychological safety and ethical leadership. Humanities and Social Sciences Communications, 12, Article 704.

American Psychological Association. (2024). 2024 Work in America Survey: Psychological Safety in the Changing Workplace.