By Dr. Xenia Wade | The Human Side of AI at Work

A CEO opens ChatGPT, pastes in a strategy question, gets a polished answer, and goes with it. No pushback. No gut check. No second opinion from the team.

On the other end, another leader refuses to touch AI altogether. Delegates all of it to IT. Treats it like a project management tool, not a leadership tool.

Both are making the same mistake about how leaders can use AI. They’re treating AI as either a replacement for their judgment or something completely separate from it. The research is clear: neither works. AI leadership is less about which tools you use and more about how you use them without losing your judgment in the process.

The Data Behind AI-Augmented Leadership

Bevilacqua, Masárová, Perotti, and Ferraris recently published a systematic review in Review of Managerial Science that examined 63 articles from 31 top-ranked academic journals on how AI is reshaping leadership. Their findings are telling. AI transforms leadership through three channels: the skills leaders need, the factors that drive adoption decisions, and how AI gets embedded in strategic decision-making.

All three require active leadership involvement. Not passive tool adoption.

The pattern across the studies Bevilacqua and colleagues reviewed is consistent. AI speeds up decisions, improves accuracy, and sharpens forecasting. But those gains are only sustainable when leaders combine AI’s analytical power with their own judgment and ethical governance. The technology alone doesn’t move the needle. What matters is how the leader works with it.

The Trap Nobody Warns You About

Klingbeil and colleagues published an experimental study in Computers in Human Behavior that should make every leader pause. Their research shows that people overrely on AI recommendations even when they’re explicitly told the AI’s accuracy level and see conflicting evidence. Even when it costs them performance.

The psychological pattern is fascinating. Logg and colleagues (2019) show that when we know advice comes from an algorithm, we tend to trust it more than human judgment, even when we have no evidence the algorithm is superior. Klingbeil’s team found that this overreliance doesn’t just produce bad outcomes for the person following the advice. It creates negative effects for third parties, too.

For leaders, this creates a specific kind of vulnerability. The more sophisticated the AI tool, the easier it is to outsource your judgment to it. And once you start doing that, your teams notice. Your decision-making muscle atrophies. Your strategic instincts get quieter.

Mistakes with AI are inevitable. The question is whether leaders have built the capability to catch those mistakes before they cause damage. And most haven’t.

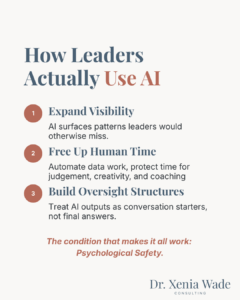

What the Best Leaders Actually Do with AI

The research points to three areas where AI genuinely amplifies leadership, and one condition that makes it all work.

They use AI to see what they’d otherwise miss.

The strongest use case for AI in leadership isn’t generating strategy. It’s expanding what leaders can see. Predictive analytics that spot emerging market trends. Scenario planning tools that model how decisions might play out across different conditions. Sentiment analysis that surfaces team dynamics leaders can’t observe in meetings.

The value isn’t in AI making the decisions. It’s in AI surfacing patterns that help leaders make better ones.

They use AI to free up time for the work only humans can do.

McKinsey’s 2026 article argues that AI can’t replace core leadership work: setting organizational aspirations, exercising nuanced judgment, and applying human creativity. These aren’t “soft skills.” They’re what separates great leaders from AI tools.

When leaders use AI to handle data synthesis, meeting preparation, and reporting, they create space for the work that actually moves organizations forward. Coaching a struggling team member. Navigating a conflict between departments. Making the hard call that no algorithm can make for you.

The research consistently frames AI as augmentation, not automation of leadership. Hossain, Fernando, and Akter’s review in the Journal of Management & Organization makes this concrete. They analyzed 37 papers from top-tier journals including Academy of Management Review, MIT Sloan Management Review, and Harvard Business Review, and identified three capabilities leaders need in the AI era: technical capability to understand AI outputs, adaptive capability to navigate disruption, and transformational capability to align AI with organizational values. Leaders who get this right treat AI like a very fast, very thorough analyst on their team. Not the decision-maker. The analyst.

They build oversight structures around AI outputs.

This is the part most organizations skip entirely. Leaders need structured checkpoints, human review processes, and the ability to override AI recommendations when context demands it.

That means asking specific questions. What data was this recommendation based on? What didn’t the model consider? What would a person with deep domain expertise say about this? Where does this conflict with what I’m hearing from the front line?

Leaders who treat AI outputs as conversation starters rather than final answers consistently produce better outcomes. It’s the difference between delegation and abdication. Aniebonam’s research on leadership in the AI era takes this further. It highlights how AI itself can help leaders develop these oversight skills, through machine-learning-based feedback systems and VR leadership simulations that train leaders to spot when AI gets it wrong before the stakes get high.

Want to know how your organization measures up? The AI Adoption Readiness Scan is a free, 5-minute diagnostic built on the Organizational Adoption Profile, measuring your organization’s human readiness for AI adoption across the five drivers that determine whether AI accelerates or stalls your transformation.

The Condition That Makes Everything Work

Here’s what connects all three of those practices. And it’s the part I care about most deeply, because it’s what I see failing in organization after organization.

Psychological safety. It’s one of the five drivers I measure in the Organizational Adoption Profile, and it’s the one that determines whether the other four can do their job.

Bevilacqua et al. found that AI’s leadership impact depends on top managers developing emotional intelligence and fostering collaborative environments. Without these, the technology’s potential remains unrealized, which in practice shows up as psychological safety failures.

Think about what it takes for a mid-level manager to say, “I think the AI got this wrong.” They’re not just questioning a tool. They’re questioning a decision their leader already endorsed. That requires enormous psychological safety. And most teams don’t have it.

Leaders who use AI well don’t just adopt the technology. They create environments where people feel safe saying, “Wait. Let’s look at this differently.” Where challenging an AI recommendation is treated as valuable critical thinking, not insubordination.

Without that, AI becomes a tool for faster bad decisions dressed up in impressive data.

What This Means for Your Organization

If you’re a CHRO or People & Culture leader reading this, the takeaway isn’t to buy more AI tools. It’s to ask a different set of questions.

Do your leaders know how to critically evaluate AI outputs? Or are they accepting recommendations without interrogation?

Have you built review structures that balance AI efficiency with human oversight? Or does the AI recommendation become the decision by default?

Is your leadership culture safe enough for people to challenge AI-informed decisions? Or does questioning the data feel like questioning the leader?

These aren’t technology questions. They’re organizational readiness questions. And they determine whether AI makes your leaders better or just faster at being wrong.

The Bottom Line

AI doesn’t make leaders. Leaders who understand their own judgment, who build cultures of psychological safety, and who know when to override the algorithm are the ones who use AI to its full potential.

The research is unambiguous. AI improves leadership outcomes when it’s embedded in a human-centered approach. The technology is only as good as the organizational culture surrounding it.

Your AI strategy is a leadership development strategy. And the sooner your organization treats it that way, the better your results will be.

Want to find out if your organization is ready?

Take the AI Adoption Readiness Scan: a free, 5-minute diagnostic built on the Organizational Adoption Profile framework, assessing your organization’s human readiness for AI adoption across the five drivers that matter most. You’ll receive a personalized report with your scores, so you can see exactly where psychological safety and the four other readiness drivers are accelerating or stalling your transformation.

Dr. Xenia Wade is an independent consultant specializing in the Human Side of AI at Work. With a PhD in Human Resource Management, 7+ years as an Accenture Consultant, and experience across enterprise-scale transformations, she helps CHROs and People & Culture leaders build the human infrastructure that makes AI adoption actually work.

Sources

- Bevilacqua, S., Masárová, J., Perotti, F.A., & Ferraris, A. (2025). Enhancing top managers’ leadership with artificial intelligence: insights from a systematic literature review. Review of Managerial Science, 1–37.

- Klingbeil, A., Grützner, C., & Schreck, P. (2024). Trust and reliance on AI — An experimental study on the extent and costs of overreliance on AI. Computers in Human Behavior, 160, 108352.

- Logg, J.M., Minson, J.A., & Moore, D.A. (2019). Algorithm appreciation: People prefer algorithmic to human judgment. Organizational Behavior and Human Decision Processes, 151, 90–103.

- McKinsey & Company (2026). Building leaders in the age of AI.

- Hossain, S., Fernando, M., & Akter, S. (2025). The influence of artificial intelligence-driven capabilities on responsible leadership: A future research agenda. Journal of Management & Organization, 31(2).

- Aniebonam, E.E. (2025). The Future of Leadership in the Context of Artificial Intelligence and Automation: Navigating Ethical and Operational Challenges. British Journal of Business and Psychology Research, 1(1), 52–62.