By Dr. Xenia Wade | The Human Side of AI at Work

Your team rolled out a new AI tool last quarter. Adoption looks strong on the dashboard. But in the hallways, something shifted. People stopped asking questions in meetings. Fewer hands go up when something doesn’t make sense. Mistakes get buried instead of surfaced. Nobody is saying it out loud, but your workforce just got quieter, and not in a productive way.

This is the hidden cost of AI adoption that most organizations never measure: the erosion of psychological safety. And research now confirms it. AI isn’t just changing how people work. It’s changing whether people feel safe enough to speak up at all.

In my previous posts on AI Shame and Psychological Safety as the secret engine of AI adoption, I explored how fear and concealment quietly sabotage adoption. This post goes deeper into the mechanism underneath: psychological safety is not only a driver of adoption but also something AI itself can erode. It also covers what the latest research tells us about how to stop that erosion.

What Psychological Safety Actually Means, and Why AI Erodes It

Amy Edmondson defines psychological safety as a shared belief that it’s safe to take interpersonal risks, such as asking questions, admitting mistakes, and challenging the status quo, without fear of embarrassment or punishment. It’s the foundation that makes teams learn, innovate, and perform under pressure. It’s one of five drivers in the Organizational Adoption Profile, and it functions as the foundation.

AI disrupts every part of that equation.

A 2025 peer-reviewed study published in Humanities and Social Sciences Communications (Nature portfolio) directly measured this relationship across organizations adopting AI. Kim, Kim and Lee (2025) studied 381 employees across three time points and found that AI adoption showed a statistically significant negative association with psychological safety. As AI use increased, employees reported feeling markedly less safe speaking up, admitting errors, or taking interpersonal risks. More troubling still, the study confirmed that psychological safety mediated the relationship between AI adoption and employee depression. AI didn’t just make people quieter; it made them unwell, and the erosion of safety was the pathway.

This isn’t a theoretical concern. It’s a measurable, documented organizational risk.

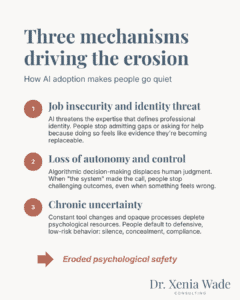

Three Mechanisms Driving the Erosion of Psychological Safety

Across the research and the many conversations I have had, three mechanisms consistently explain why AI makes people go quiet.

1. Job Insecurity and Identity Threat

When AI automates or “augments” cognitive work, the thinking, analyzing, and decision-making that professionals have built careers around, it doesn’t just create efficiency. It creates an existential question: Am I still needed here?

Kim, Kim and Lee (2025) found that AI adoption erodes psychological safety by threatening employees’ sense of competence and job security. When people feel their expertise is being challenged by AI, they become less willing to take interpersonal risks, including admitting confusion or asking for help, because doing so feels like further evidence that they’re becoming replaceable.

This is Identity Drift, the disorientation that happens when the skills and knowledge that defined your professional identity suddenly feel less valuable. Preliminary research on AI conversational agents corroborates this at the individual level: Chandra et al. (2025) found that users reported a loss of individuality and agency when AI failed to recognize their unique needs, experiencing diminished autonomy and self-expression. When identity is under threat, whether from organizational AI or personal AI interaction, people don’t take interpersonal risks. They protect themselves.

2. Loss of Autonomy and Control

When decision-making shifts to algorithms, employees perceive threats to their autonomy and expertise. Kim, Kim and Lee (2025) show that AI adoption reduces psychological safety, and when people feel less safe, they become less willing to ask questions, voice concerns, or acknowledge mistakes. After all, if “the system” made the call, who feels confident raising their hand to say they’re unsure? The tool designed to increase fairness can end up suppressing voice.

This dynamic extends beyond the workplace. Chandra et al. (2025) found that AI conversational agents giving inappropriate or context-blind advice, such as suggesting extreme actions without understanding the situation, led users to feel it was unsafe to disclose sensitive information or rely on the system in vulnerable moments. When AI systems don’t allow for challenge or nuance, people learn to disengage rather than speak up.

3. Chronic Uncertainty and Cognitive Overload

Constant changes to roles, skills, and workflows during AI adoption create what Kim, Kim and Lee (2025) describe as heightened and persistent uncertainty. Drawing on conservation of resources theory, they argue that when employees feel their psychological resources are being depleted by ongoing ambiguity, they shift into defensive, low‑risk behavior. Psychological safety drops, and with it the willingness to experiment, speak up, or take interpersonal risks.

The scale of this exhaustion is now visible in large-scale workforce data. A McKinsey Health Institute survey (2026) of over 30,000 employees across 30 countries found that one in five professionals experience burnout symptoms, including cognitive and emotional impairment, with rapid AI‑driven role changes emerging as a contributing factor. Another McKinsey (2025) report found that while 71% of employees trust their employers to deploy AI ethically, half report concerns about inaccuracies and hallucinations, more than a third fear workforce displacement, and roughly a third want greater transparency into how AI makes decisions. Trust exists, but it is conditional and thin.

Meanwhile, ManpowerGroup’s 2026 workforce data revealed that while AI workplace usage rose 13% year‑over‑year, employee confidence in the technology dropped 18%, with leadership warning that a workforce intimidated by rapid AI change is more likely to see productivity decline than improve (ManpowerGroup, 2026).

More tools. Less confidence. That’s not a team ready to take creative risks. That’s a team in survival mode. And as I described in my post on AI Shame, survival mode doesn’t look like resistance. It looks like silence, concealment, and performative compliance.

Want to see where your organization’s real adoption barriers are? The AI Adoption Readiness Scan, built on the Organizational Adoption Profile, gives you a free, personalized read on your human readiness for AI adoption in three minutes.

The Trust Ambiguity Problem

Edmondson’s most recent work with Jayshree Seth adds a critical layer to this picture. Writing in Harvard Business Review, they describe a phenomenon they call “trust ambiguity,” where AI errors don’t just erode trust in the technology itself, but cascade into doubt about one’s own judgment and colleagues’ reliability (Edmondson & Seth, 2026).

Here’s what makes this different from previous waves of workplace technology: when a human colleague makes an error, teams typically rally around the problem. They discuss it, learn from it, and move forward. When AI makes an error, the response is often expanding, directionless doubt. People aren’t sure what went wrong, whether it will happen again, or whose job it is to catch it. That ambiguity inhibits exactly the behaviors that define psychological safety: speaking up, questioning, and learning.

The World Economic Forum’s 2026 analysis reinforces this. The Four Futures for Jobs in the New Economy report shows that skills gaps persist even as organizations invest in training, because low‑safety cultures discourage employees from questioning AI outputs, experimenting with new approaches, or reporting errors. In several of the WEF’s scenarios, especially those where AI advances faster than workforce readiness, unchallenged AI decisions compound operational risk, particularly in safety‑critical sectors (World Economic Forum, 2026). Psychological safety isn’t a cultural luxury. It is a performance and safety lever.

The Broader Safety Landscape: AI Beyond the Workplace

The erosion of psychological safety isn’t limited to organizational settings. Emerging research on generative AI mental health chatbots has begun documenting similar risks. A recent scoping review protocol highlighted widespread concerns, including misinformation, inappropriate or harmful responses, unpredictable crisis behavior, and violations of privacy – all of which undermine trust and discourage users from disclosing sensitive information (Olisaeloka, Richardson & Vigo, 2026). While this research is still in its early stages, the direction is clear.

Stanford’s Human‑Centered AI Institute has also warned that many AI mental‑health tools fall short of core ethical expectations, including avoiding harm, preventing stigma, and responding appropriately in safety‑critical situations, which further undermines users’ sense of psychological safety (Stanford HAI, 2025). Across both workplace and personal contexts, the pattern is consistent: when AI systems are opaque and unpredictable, people protect themselves by withdrawing, staying silent, or disengaging.

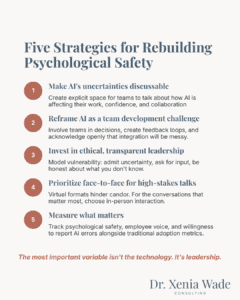

What Actually Works: Rebuilding Psychological Safety in the Age of AI

The research doesn’t just document the problem. It points toward solutions. And the good news is that the most important variable isn’t the technology. It’s leadership.

Make AI’s Uncertainties Discussable

The single most powerful intervention is deceptively simple: create explicit space for people to talk about how AI is affecting their work, their confidence, and their collaboration. Edmondson and Seth (2026) recommend specific questions like “How is AI affecting your collaboration?” or “Where are you uncertain about AI’s role in your decisions?” These aren’t soft questions. They’re diagnostic instruments that surface trust issues before they calcify into silence.

I’ve found that most leaders assume their teams are “fine” with AI adoption because nobody is complaining. But silence isn’t agreement. It’s often the first symptom of Silent Resistance. You have to actively invite the conversation.

Reframe AI Integration as a Team Development Challenge

Stop treating AI rollouts as pure technology deployment. Every new AI tool changes team dynamics, role expectations, and collaboration patterns. That makes it a team development challenge, and it requires the same intentional leadership you’d bring to any major team transition.

This means involving teams in decisions about how AI tools are used, creating feedback loops so people can flag what’s working and what isn’t, and acknowledging openly that the integration process will be messy. The messiness isn’t a failure of implementation. It’s the reality of human beings adapting to fundamental changes in how they work.

Invest in Ethical, Transparent Leadership

Kim, Kim and Lee (2025) found one critical moderator: ethical leadership significantly buffered the negative impact of AI adoption on psychological safety. In teams with leaders who demonstrated transparency, fairness, and genuine concern for employee well-being, the drop in psychological safety was meaningfully smaller.

What does this look like in practice? It means being honest about what you don’t know about the AI tools you’re deploying. It means acknowledging job insecurity concerns directly instead of dismissing them with reassurances that may not hold. It means modeling the vulnerability you want from your team: admitting when you’re uncertain, asking for input, being transparent about decision-making. As I wrote in my previous post, leaders who say “I don’t know, let’s try it” give their teams permission to experiment.

Prioritize Face-to-Face Interaction for High-Stakes Conversations

In remote and hybrid environments, AI-related anxieties are harder to detect and easier to ignore. Edmondson and Seth (2026) specifically flag that virtual formats already hinder candor, and layering AI uncertainty on top makes it worse. For the conversations that matter most (discussing how AI is changing roles, surfacing concerns, processing the emotional impact of rapid change), prioritize in-person or high-bandwidth interaction.

Measure What Matters

You can’t manage what you don’t measure. Most organizations track AI adoption rates, efficiency gains, and cost savings. Almost none systematically measure psychological safety, employee voice, or willingness to report AI-related errors. Add these to your dashboard. Pulse surveys, team retrospectives, and structured check-ins can surface the signals you’re currently missing.

This is exactly what the Organizational Adoption Profile is designed to do: measure the emotional, cultural, and psychological dimensions of AI readiness that traditional metrics miss entirely.

→ Where does your organization stand on the five drivers? Take the free diagnostic to find out.

The Opportunity Hidden in the Challenge

Here’s what I want CHROs and People & Culture leaders to take away from this: the organizations that get AI adoption right won’t be the ones with the most sophisticated technology. They’ll be the ones that maintain, and even strengthen, the human conditions that make good work possible.

Psychological safety is the infrastructure that allows your people to learn new tools, flag problems early, collaborate across disciplines, and adapt to change without shutting down. Without it, even the best AI implementation will underperform, because the humans in the loop won’t be fully engaged.

The evidence is now clear. AI adoption, left unmanaged, erodes the psychological safety your organization needs to thrive. But with intentional, ethical leadership, that erosion isn’t inevitable. The question isn’t whether AI will change your team’s dynamics. It’s whether your leaders are prepared to manage that change with the same rigor they bring to the technology itself.

Before your next AI initiative, ask your team one question: “What’s the worst thing that could happen if you tried this and it didn’t work?”

Listen to the answers. If you hear “I’d learn something,” you have psychological safety. If you hear silence, nervous laughter, or “I’d get in trouble,” you have your diagnosis.

Frequently Asked Questions About AI and Psychological Safety

AI erodes psychological safety through three primary mechanisms: it triggers job insecurity and identity threat as employees question their relevance, it shifts decision-making authority to algorithms which reduces employees’ sense of autonomy and voice, and it creates chronic uncertainty through constantly changing tools and opaque processes that push people into defensive, low-risk behavior. A 2025 study showed a statistically significant negative relationship between organizational AI adoption and employee psychological safety (Kim, Kim & Lee, 2025).

The strongest research evidence linking AI to psychological safety comes from Kim, Kim and Lee’s (2025) peer‑reviewed, three‑wave study of 381 employees, published in Humanities and Social Sciences Communications. They found that organizational AI adoption significantly reduced psychological safety, and that this reduction in safety mediated increased depressive symptoms. Large‑scale workforce surveys from McKinsey, ManpowerGroup, and the World Economic Forum echo these patterns, reporting AI‑related burnout, declining confidence, and inhibited voice behaviors as employees navigate rapid technological change.

Research points to several evidence-based strategies: making AI’s uncertainties explicitly discussable in team settings (Edmondson & Seth, 2026); reframing AI integration as a team development challenge; investing in ethical and transparent leadership, which has been shown to buffer safety erosion (Kim, Kim & Lee, 2025); prioritizing high-bandwidth communication for sensitive conversations; and measuring psychological safety alongside traditional adoption metrics.

Trust ambiguity, described by Edmondson and Seth (2026) in Harvard Business Review, refers to how AI errors cascade into expanding, directionless doubt, not just about the technology, but about one’s own judgment and colleagues’ reliability. Unlike human errors that teams can collaboratively resolve, AI mistakes create uncertainty without clear resolution pathways, inhibiting the speaking-up behaviors central to psychological safety.

Kim, Kim and Lee (2025) found that ethical leadership significantly buffered the negative impact of AI adoption on psychological safety. Leaders who demonstrated transparency, fairness, and genuine concern for employee well-being helped their teams maintain openness and voice even as AI changed workplace dynamics, making leadership development a critical component of AI implementation strategy.

Psychological Safety is one of five drivers in the Organizational Adoption Profile, alongside Adaptability Mindset, Empowerment Orientation, Action Style, and Adoption Capacity. It functions as the foundation: without psychological safety, the other four drivers cannot activate. You can measure all five through the AI Adoption Readiness Diagnostic.

Dr. Xenia Wade specializes in Human-Centered AI Change, helping organizations build the emotional and cultural readiness their people need to actually adopt AI. With a PhD in Human Resource Management and experience across enterprise-scale organizational transformations, she focuses on the human side of AI at work, the fears, the identity shifts, and the invisible barriers that no productivity dashboard can capture.

Want to know how psychologically safe your organization is? Take the free diagnostic →

Related concepts: Identity Drift | Silent Resistance | Emotional Carrying Capacity | Human Overload

Sources

- Kim, B. J., Kim, M. J., & Lee, J. (2025). “The dark side of artificial intelligence adoption: Linking AI adoption to employee depression via psychological safety and ethical leadership.” Humanities and Social Sciences Communications, 12, Article 704.

- Edmondson, A. C. & Seth, J. (2026, February). “How to Foster Psychological Safety When AI Erodes Trust on Your Team.” Harvard Business Review.

- Chandra, M., Naik, S., Ford, D., Okoli, E., De Choudhury, M., Ershadi, M., … & Suh, J. (2025, June). “From lived experience to insight: Unpacking the psychological risks of using AI conversational agents.” In Proceedings of the 2025 ACM Conference on Fairness, Accountability, and Transparency (pp. 975-1004).

- Olisaeloka, L., Richardson, C., & Vigo, D. (2026). “User experience and safety of generative AI-based mental health chatbots: Scoping review protocol.” PLOS ONE.

- Stanford Institute for Human-Centered Artificial Intelligence. (2025). “Exploring the Dangers of AI in Mental Health Care.” Stanford University.

- McKinsey. (2026). “The Human Skills You Will Need to Thrive in 2026’s AI-Driven Workplace.”

- McKinsey. (2025). “AI in the workplace: A report for 2025.”

- ManpowerGroup. (2026). Global Talent Barometer 2026: AI Use Accelerates as Worker Confidence Falls and “Job Hugging” Takes Hold.

- World Economic Forum. (2026). Four Futures for Jobs in the New Economy: AI and Talent in 2030.