By Dr. Xenia Wade | The Human Side of AI at Work

Your organization just invested in AI tools. The training is done. The licenses are active. And adoption is stalling anyway.

Here’s what nobody tells you: the problem isn’t your technology, your budget, or your people’s technical skills. It’s that your employees don’t feel safe enough to use it.

Psychological safety, the belief that you won’t be punished or humiliated for speaking up, asking questions, or making mistakes, is the single biggest predictor of whether your AI adoption will succeed or quietly collapse. And in most organizations rolling out AI right now, it’s almost entirely absent from the strategy.

The AI Anxiety Gap Your Dashboard Can’t See

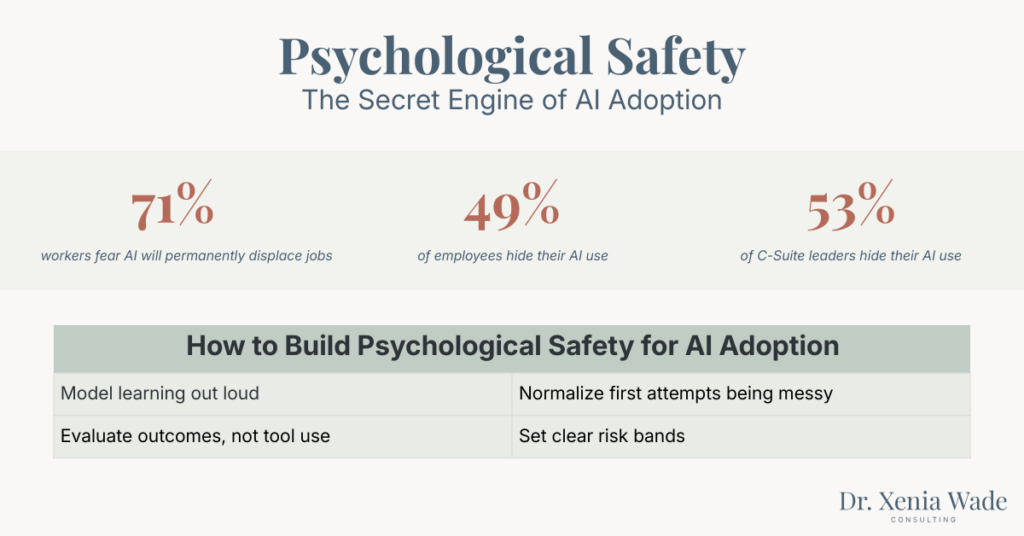

The fear is real, and it’s widespread. A 2025 Reuters/Ipsos poll found that 71% of Americans are concerned that AI will lead to permanent job displacement. A 2023 EY study found that 75% of employees believe AI will make certain job roles obsolete.

These aren’t the opinions of a resistant minority. This is the majority of your workforce quietly questioning whether their skills, their experience, and their role still matter.

And the anxiety doesn’t stay abstract. It changes behavior. As I wrote in my previous post on AI Shame, 49% of employees are already concealing their AI use at work. They’re not resisting the technology; they’re hiding from the judgment that comes with using it.

What makes this worse is that the anxiety isn’t limited to junior employees. It runs straight to the top. The same WalkMe data I referenced in my AI Shame research shows that 53% of C-suite leaders also hide their AI use. The people setting the strategy are experiencing the same fear as the people executing it; they’re just better at concealing it.

This is what I call the AI Anxiety Gap: the distance between what leadership thinks is happening with adoption and what employees are actually experiencing emotionally. Your dashboard shows license activations. It doesn’t show the fear underneath.

Why Training Alone Will Never Close the Gap

Most AI adoption strategies follow the same playbook: select tools, train people, measure usage. It’s logical. It’s also incomplete.

Research by Amy Edmondson of Harvard Business School, who brought the concept of team psychological safety into the mainstream, shows that team learning depends on the belief that interpersonal risk-taking is safe. Without it, people don’t ask questions, don’t admit confusion, and don’t experiment.

AI adoption is nothing but risk-taking. Every time an employee uses an AI tool, they’re exposing what they don’t know. They’re risking looking incompetent. They’re potentially revealing that a machine can do part of their job faster than they can.

A 2025 study published in Humanities and Social Sciences Communications (Kim, Kim & Lee) followed 381 employees across three time points and found a direct chain: AI adoption erodes psychological safety, and reduced psychological safety increases depression. The relationship isn’t theoretical; it’s measurable and sequential.

The study also found that ethical leadership moderates the damage. When leaders demonstrate transparency, fairness, and genuine concern during AI transitions, the negative impact on psychological safety is significantly reduced.

In other words: the technology doesn’t cause the problem. The absence of safety around it does.

What Unsafe AI Adoption Actually Looks Like

In organizations without psychological safety, AI adoption follows a predictable pattern:

Shadow AI. Employees experiment in secret because they’re afraid of getting it wrong publicly. They use unapproved tools. They don’t share what they learn. The organization gets none of the benefit.

Performative compliance. People attend the training. They log into the platform. They never actually use it for real work. Adoption metrics look fine. Real adoption is near zero.

Innovation paralysis. Nobody wants to be the first to try something that might fail. Teams wait for someone else to go first, and nobody does. Meanwhile, competitors are learning at speed.

Blame culture escalation. When early AI experiments produce imperfect results (which they will), the reaction in low-safety environments is punishment, not learning. This teaches everyone else to stay away. One failed experiment becomes a cautionary tale that circulates for months.

The pattern compounds. Each of these behaviors reinforces the others, creating a silent resistance loop: anxiety drives hiding, hiding prevents shared learning, lack of learning reinforces anxiety, and the cycle accelerates. From the outside, it looks like a training problem. From the inside, it’s a safety crisis.

As Erica Farmer wrote in Training Journal: “AI thrives on experimentation, and experimentation only happens in safe environments. If people don’t feel they can fail safely, they won’t learn at all.”

This is the exact dynamic I describe through the Organizational Adoption Profile. One of its five drivers, Psychological Safety, determines whether the other four (Adaptability Mindset, Empowerment Orientation, Action Style, and Adoption Capacity) can function at all. Without safety, adaptability has no room to grow. Without safety, empowerment is just a word on a slide.

→ Where does your organization stand on the five drivers? Take the free diagnostic to find out.

What Leaders Must Do Differently

The solution isn’t another change management framework. It’s a fundamental shift in how leaders show up during AI transitions.

Redefine Total Compensation

Today’s employees aren’t just evaluating salary. They’re evaluating whether their organization feels safe during disruption. As Madison Davis put it: Total Compensation = Salary + Safety + Skills. If your people don’t feel psychologically safe and invested in, they’ll leave for organizations that provide both, regardless of what you pay them.

Model Learning Out Loud

The old leadership model, the expert who always has the answers, is obsolete in an AI-enabled organization. Tools evolve faster than any one person can master. Leaders who say “I don’t know, let’s try it” or “That prompt didn’t work, what should we change?” give their teams permission to experiment.

This isn’t weakness. It’s the new credibility. And research backs it up: Edmondson’s work links psychological safety to more learning behavior in teams, including asking for help, admitting errors, and experimenting. In AI adoption, experiments equal learning, and learning velocity determines competitive advantage.

Frame AI as a Learning Journey, Not an Implementation

If your AI strategy sounds like a rollout plan, you’ve already lost your people. Position AI as something to explore, not something to comply with. Create low-pressure opportunities for experimentation: “AI curiosity sessions,” peer learning circles, or structured time to test tools without performance pressure.

The Deloitte 2025 survey found that 88% of executives report their organizations are actively communicating ethical AI practices, but workshops and ongoing training were rated the most effective trust-building methods. Communication alone isn’t enough. People need to experience safety, not just hear about it.

Set Clear Boundaries That Free People Up

Nothing kills psychological safety faster than ambiguity. If people don’t know what’s acceptable, they either overuse AI recklessly or avoid it entirely out of fear. Create explicit risk bands: what’s expected (green zone), what needs review (yellow zone), and what requires approval (red zone). When people know where experimentation is encouraged and protected, they actually do it.
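For teams that want to write their risk bands down in a form people can actually look up, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than a recommended policy: the task names, the `POLICY` table, and the `band_for` helper are hypothetical, and each organization would fill in its own entries.

```python
from enum import Enum


class RiskBand(Enum):
    """The three zones described above."""
    GREEN = "expected: experiment freely"
    YELLOW = "needs review before it ships"
    RED = "requires approval before you start"


# Hypothetical example entries; replace with your organization's own list.
POLICY = {
    "drafting internal meeting notes": RiskBand.GREEN,
    "summarizing public research": RiskBand.GREEN,
    "drafting customer-facing copy": RiskBand.YELLOW,
    "processing confidential data": RiskBand.RED,
}


def band_for(task: str) -> RiskBand:
    """Look up the band for a task, ignoring case and surrounding spaces.

    Assumption: tasks that are not listed default to YELLOW, so an
    unlisted task leads to a review conversation rather than a ban.
    """
    return POLICY.get(task.strip().lower(), RiskBand.YELLOW)


if __name__ == "__main__":
    for task in ("Drafting internal meeting notes", "Translating a contract"):
        band = band_for(task)
        print(f"{task}: {band.name} ({band.value})")
```

The one design choice worth noticing is the default. Sending unlisted tasks to the yellow zone, not the red one, keeps the list from reading as a set of prohibitions and signals that a new idea should start a conversation.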

The Competitive Reality

Teams with high psychological safety learn faster. In AI adoption, learning speed is the competitive advantage, not which tool you picked or how much you spent on licenses.

Your competitors face identical choices right now: build psychological safety into their AI strategy, or watch their best people either burn out from chronic anxiety or leave for organizations that feel safer.

A 2024 bibliometric and systematic review in Frontiers in Artificial Intelligence (Soulami, Benchekroun & Galiulina) synthesized studies on AI and employee well‑being and concluded that organizational strategies are needed to mitigate adverse effects of AI while leveraging its potential to enhance autonomy, satisfaction, and AI‑enabled innovation.

The technology is the easy part. Making people feel safe enough to use it is the real transformation. And the organizations that get this right won’t just adopt AI faster; they’ll retain the people who know how to use it. Because employees don’t leave bad tools. They leave environments where they feel unsafe to learn.

Start Here: One Question That Changes Everything

Before your next AI initiative, ask your team one question: “What’s the worst thing that could happen if you tried this and it didn’t work?”

Listen to the answers. If you hear “I’d learn something,” you have psychological safety. If you hear silence, nervous laughter, or “I’d get in trouble,” you have your diagnosis.

AI adoption isn’t a technology problem. It’s a psychological safety problem. And the organizations that understand this will be the ones still standing when the dust settles.

Want to know exactly where your organization’s adoption barriers are – emotional, cultural, and practical? Take the free AI Adoption Readiness Diagnostic →

Frequently Asked Questions About Psychological Safety and AI Adoption

What is psychological safety in the context of AI adoption?

Psychological safety is the shared belief that it’s safe to take interpersonal risks, like asking questions, admitting confusion, or making mistakes, without facing punishment or humiliation. In AI adoption, this means employees feel safe to experiment with AI tools, share what they learn, and acknowledge when something doesn’t work, without fear of being judged as incompetent or replaceable.

Why does psychological safety matter more than training for AI adoption?

Training gives people skills, but psychological safety gives people permission to use them. Research shows that without safety, employees won’t experiment, ask for help, or admit confusion, all behaviors essential to learning a new technology. Organizations can invest heavily in AI training and still see near-zero real adoption if the cultural environment punishes mistakes.

How does AI adoption affect employee mental health?

A 2025 study of 381 employees (Kim, Kim & Lee) found that AI adoption directly reduces psychological safety, which in turn increases depression. The mechanism is sequential: the uncertainty created by AI threatens employees’ sense of security, and without protective leadership and culture, that insecurity escalates into measurable mental health impacts.

What is the AI Anxiety Gap?

The AI Anxiety Gap is the distance between what leadership believes is happening with AI adoption (based on dashboards, license counts, and training completion rates) and what employees are actually experiencing emotionally (fear, confusion, identity threat). Most organizations dramatically underestimate this gap because traditional adoption metrics don’t capture emotional readiness.

What can leaders do to build psychological safety during AI transitions?

Leaders can model vulnerability by experimenting with AI publicly and sharing failures, reframe AI as a learning journey rather than a mandate, set clear boundaries about where experimentation is encouraged, and evaluate work quality rather than tool choice. The most important shift is moving from “expert who has all the answers” to “learner who asks good questions.”

How is psychological safety connected to the Organizational Adoption Profile?

Psychological Safety is one of five drivers in the Organizational Adoption Profile, alongside Adaptability Mindset, Empowerment Orientation, Action Style, and Adoption Capacity. It functions as the foundation: without psychological safety, the other four drivers cannot activate. You can measure all five through the AI Adoption Readiness Diagnostic that I offer.

Sources

- Lange, J. & Alper, A. (2025). “Americans Fear AI Permanently Displacing Workers.” Reuters/Ipsos, August 2025.

- EY Americas (2023). “New EY Research Reveals the Majority of US Employees Feel AI Anxiety amid Explosive Adoption.” December 2023.

- Edmondson, A. (1999). “Psychological Safety and Learning Behavior in Work Teams.” Administrative Science Quarterly, 44(2), 350-383.

- Kim, B.J., Kim, M.J. & Lee, J. (2025). “The dark side of artificial intelligence adoption: linking AI adoption to employee depression via psychological safety and ethical leadership.” Humanities and Social Sciences Communications, 12, Article 704.

- Deloitte (2025). “Preparing the Workforce for Ethical and Trustworthy AI.”

- Farmer, E. (2025). “Psychological safety is the missing piece in your AI strategy.” Training Journal, November 2025.

- Soulami, M., Benchekroun, S. & Galiulina, A. (2024). “Exploring how AI adoption in the workplace affects employees: a bibliometric and systematic review.” Frontiers in Artificial Intelligence, 7, 1473872.

- WalkMe (2025). “AI in the Workplace Survey.” [Referenced via AI Shame article]